Imagine it’s 2042. And your day doesn’t start with a screen. It starts with you.

Your system wakes you, not with an alarm, but with a gentle vibration at your wrist. It knows you’re sensitive to noise in the morning, so the room responds with a gradual shift in light. You’re greeted by the faint scent of “spring rain”, a personal anchor for calm focus.

As you move through the morning, your environment adapts. The interface that supports your work doesn’t simply open by default; it checks your readiness first. If your sleep was shallow or your heart rate is still elevated, it begins in “quiet mode.” Muted tones. Fewer options. A soft voice, asking: “Shall I hold your meetings until 10?”

This isn’t a vision about productivity. It’s a story about technology that senses how you are and responds with respect.

What are neuroadaptive interfaces?

When I speak of neuroadaptive systems, it’s easy to imagine headsets reading your thoughts and turning them into commands. That exists in labs, in early-stage BCIs, in speculative futures. And while it’s fascinating, it’s not the world I am describing here.

Neuroadaptive interfaces, as I understand them, don’t aim to control systems by thinking. They aim to sense, adjust, and resonate. Not by knowing your intention. But by understanding your state. Your level of focus. Your rhythm of attention. The emotional texture behind the click.

These are not mind-reading machines. These are systems that respond like good listeners: not by taking over, but by supporting better timing, clarity, and presence. They adjust in real time based on your cognitive, emotional, and physiological signals. They listen not just to clicks, but to:

- your eye movement and blink rate

- the micro-expressions on your face

- the depth of your breath

- the rhythm of your gestures

- the subtle pause before you answer

And in turn, they adapt: what they show, how they sound, when they ask, and even how they feel. This is no longer user-interface interaction in the classical sense of UX. This system resonance is HX: Human Experience.
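Here is a minimal Python sketch of the sense-and-adapt loop, using the morning “quiet mode” readiness check as the example. Everything in it is hypothetical. The `Readiness` fields, the 0.6 sleep-score cutoff, the 10 bpm margin above resting heart rate, and the `choose_mode` and `ui_settings` helpers are illustrative assumptions, not the API of any real product.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    QUIET = "quiet"
    STANDARD = "standard"


@dataclass
class Readiness:
    sleep_score: float     # hypothetical scale: 0.0 = very shallow sleep, 1.0 = deep, restful sleep
    heart_rate_bpm: float  # current heart rate
    resting_hr_bpm: float  # the user's personal resting baseline


# Hypothetical thresholds, chosen only for illustration
SLEEP_THRESHOLD = 0.6
HR_MARGIN_BPM = 10.0


def choose_mode(r: Readiness) -> Mode:
    """Start in quiet mode if sleep was shallow or heart rate is still elevated."""
    shallow_sleep = r.sleep_score < SLEEP_THRESHOLD
    tense = (r.heart_rate_bpm - r.resting_hr_bpm) > HR_MARGIN_BPM
    return Mode.QUIET if shallow_sleep or tense else Mode.STANDARD


def ui_settings(mode: Mode) -> dict:
    """Map the chosen mode to presentation choices: muted tones, fewer options, a soft prompt."""
    prompt: Optional[str] = None
    if mode is Mode.QUIET:
        prompt = "Shall I hold your meetings until 10?"
        return {"palette": "muted", "max_options": 3, "prompt": prompt}
    return {"palette": "default", "max_options": 8, "prompt": prompt}


if __name__ == "__main__":
    morning = Readiness(sleep_score=0.4, heart_rate_bpm=72, resting_hr_bpm=64)
    mode = choose_mode(morning)
    print(mode, ui_settings(mode))
```

A real system would draw on richer signals (blink rate, breath, gesture rhythm) and would learn personal baselines rather than rely on fixed cutoffs. The sketch only shows the shape of the idea: the system senses a state, decides on a mode, and adapts how it presents itself.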

If you work with data and decisions

White-collar professionals. Office workers. Decision-makers. Managers.

You spend your d[…]nitive load and reduce noise when you drift. They suggest visual simplifications without dumbing things down. If you become overconfident, they gently flag past cases where similar signals led to error. A faint tone. A subtle border shift. Some teams even use scent profiles to support different thinking states: citrus for focus, vetiver for grounding. The system doesn’t distract. It keeps you connected to yourself.

If you work in high-stress environments

Pilots. Dispatchers. Emergency teams. ICU nurses.

In 2042, your interface reads your tension and responds. Critical alerts adjust based on your breathing and heart rate variability. When your focus starts to fragment, interface elements pulse slightly to refocus your attention. Lighting adapts to stress cycles. Background noise is filtered based on auditory load. Sometimes, just for a moment, everything pauses. Not to freeze, but to give you space. The goal isn’t calm. The goal is clarity under pressure.

If your job is physical and fast

You operate machines, move, carry, build, react.

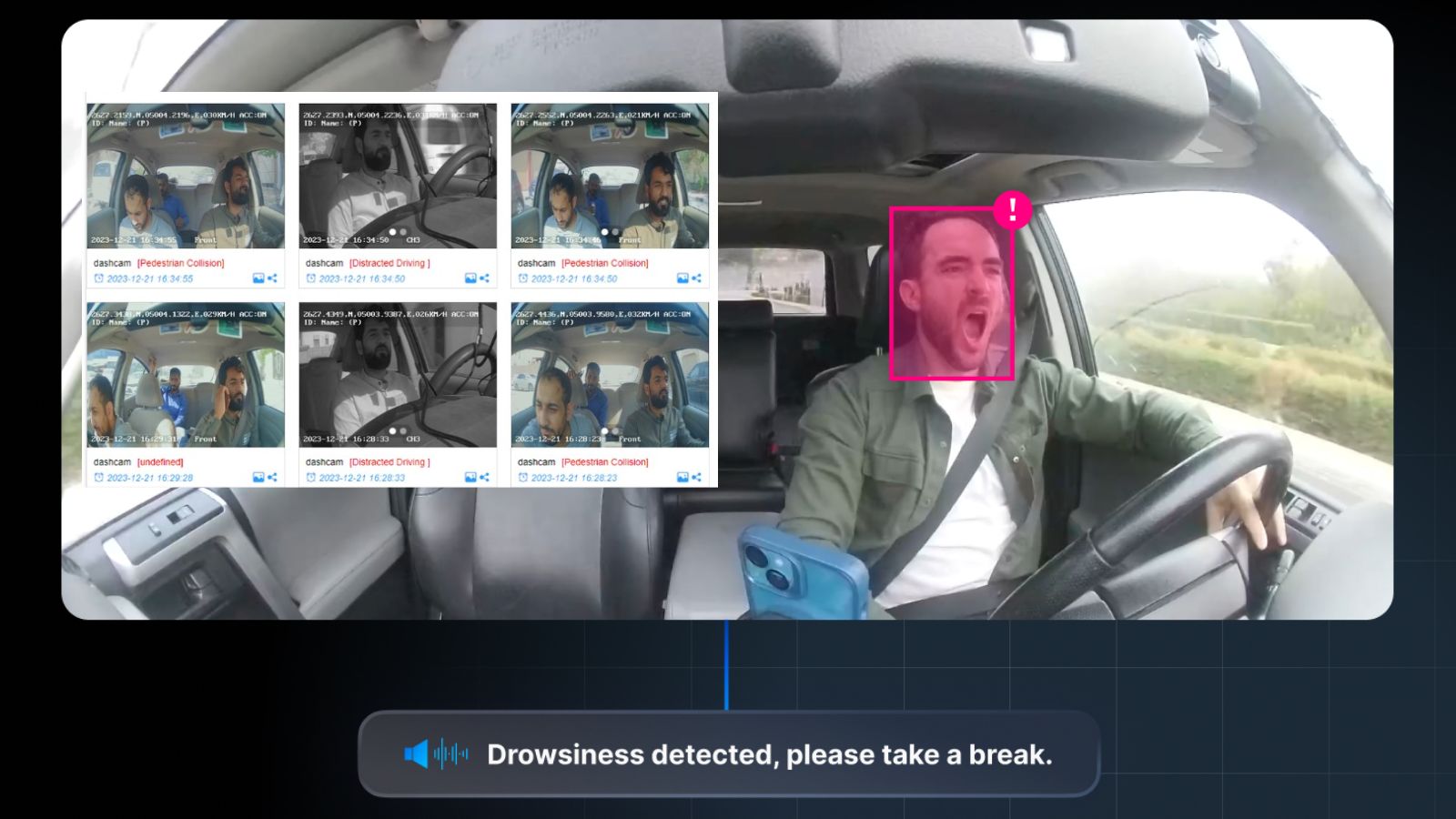

Your system knows when your motions start to lose symmetry. Fatigue detection prompts rest or activates safety constraints before you notice. Forklifts shift torque curves based on your micro-adjustments. Your environment responds with airflow modulation and visual compression when your body signals overload. You don’t need to log your stress. You wear it, and your system listens.

If you’re a consumer

Booking a trip. Finding a recipe. Getting through your inbox.

Your interface senses overwhelm and shifts mode. Menus collapse. Audio guides offer a slower pace. When joy rises, the system subtly opens new paths. In immersive experiences, scent and haptics shape transitions, not as a gimmick but as sensory alignment. You don’t have to choose settings. You just have to show up.

So what’s already here? (Reality check: 2025)

This future is not fiction. It’s just unevenly distributed. Some of it is in labs. Some is in commercial pilots. Some is on your wrist.

Today’s landscape:

- Eye tracking in AR/VR and simulator UX

- Emotion-sensitive voice interfaces in automotive and support

- Wearables like Muse and Empatica for focus, stress, recovery

- Adaptive soundscapes (e.g. Endel, Calm, Muse)

- BCI prototyping: Neurable, NextMind, Neuralink

- Haptic feedback in gloves, chairs, steering wheels

- Early scent interfaces in retail, mindfulness, immersive design

- Gesture recognition in AR, healthcare, public interfaces

It’s still raw. Sometimes clunky. But directionally very real.

Meanwhile, compact edge-AI hardware powers local intelligence, making sensors smart without cloud latency.

The shift isn’t technical. It’s cultural.

Shifting from the dead glass interface of a screen to the living interface of bodies: We’ve spent decades designing for screens, clicks, efficiency. But presence? Emotion? Readiness? These have no pixel. No dropdown menu. No toggle.

Shifting from product-led usability to a dynamic, human-led experience: Neuroadaptive systems aren’t about doing more. They’re about doing things when you’re ready for them, and in ways that don’t overwhelm your nervous system.

Shifting from technology influencing the consumer to the consumer influencing technology: Many, maybe most, multisensory experiences today try to fool consumers into specific behaviors. Buy more. Stay longer. Be happy. Just imagine how much more pleasant a day becomes when your mood sets the tone. Freedom of feelings rediscovered.

Designing for resonance, not reaction

Let’s return to the initial thought: This is not the future of productivity. This is the future of dignity in digital systems. It means building tools that support people in their full state, not just their to-do list. It means sensing when to speak, and when not to. It means designing interfaces that smell like spring, pause when needed, and feel less like machines and more like someone who cares.

That’s not too much to ask. Actually, in 2042, it will be the minimum, as humans evolve alongside technological capabilities. And with more mature users and consumers come higher standards for technology. It’s evolution, baby!

So trust your gut and rub your hands together for a nice-smelling future.

All product images are used for editorial and educational purposes only.

Sources (left to right, top to bottom):

Wheelwhisperer.com, AVL-ksa.com, BMWBlog.com, Mi.com, IEC (etech.iec.ch), Mikeshouts.com, Sennheiser.com, Meta (about.fb.com), Jaycon.com

All rights remain with the original publishers.

If you are a rights holder and would like to suggest a correction or removal, please contact us.

Driver Drowsiness Alert System (Coffee Icon): Image courtesy of WheelWhisperer.com, used for editorial purposes only.

Facial Monitoring System for Driver Fatigue: Image courtesy of AVL-KSA.com, used for editorial purposes only.

BMW Gesture Control System: Image © BMWBlog.com, used for editorial reference.

Xiaomi Smart Scent Diffuser: Image © Xiaomi, used for non-commercial editorial illustration.

Brain-Computer Interfaces (BCI) Visual: Image courtesy of IEC (etech.iec.ch), used with credit for educational purposes.

Woojer Edge Haptic Wearable: Image © mikeshouts.com, used for editorial reference under fair use.

Sennheiser TC ISP Intelligent Sound Platform: Image © Sennheiser, used for editorial purposes only with attribution.

Meta Orion AR Glasses Prototype: Image © Meta (about.fb.com), used for informational purposes only.

Edge AI Hardware (Jaycon): Image inspiration from Jaycon.com, used for educational illustration under fair use.